AI Transparency Won’t Build Trust Unless It Solves the Right Problem

AI is no longer an experiment in advertising. It is becoming infrastructure. The real question now is not whether the industry will use it, but whether it can use it without weakening trust.

That challenge is getting more specific. In January 2026, the IAB released its first AI Transparency and Disclosure Framework, explicitly taking a risk-based approach rather than calling for blanket labeling. Under that framework, disclosure is most important when AI materially affects authenticity, identity, or representation in ways that could mislead consumers. Routine production assistance and clearly stylized uses do not automatically require the same treatment.

The major platforms are moving in a similar direction. Meta says it has been labeling ads created or significantly edited using its generative AI creative features and is expanding transparency around ads made or edited with non-Meta AI tools (Pavón, 2025). YouTube’s policy likewise focuses on altered or synthetic content that looks realistic and could be mistaken for real, while unrealistic or minor edits do not trigger the same expectation (YouTube Help, n.d.).

That shift matters because the consumer issue is not simply “AI was used.” The issue is whether AI makes advertising feel less trustworthy by obscuring how personal data is being used, what is real, and who remains accountable.

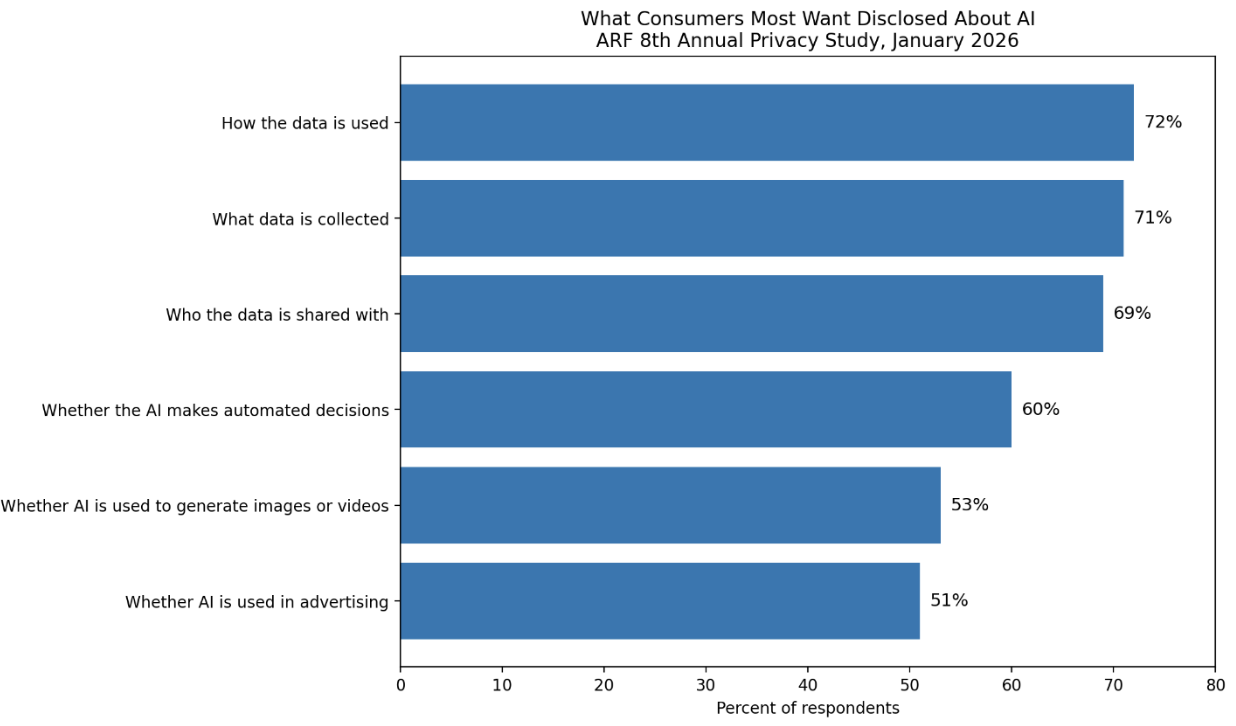

Recent research suggests that consumers do want AI disclosure, but not in a purely technical sense. In the ARF’s 8th Annual Privacy Study, based on 1,245 U.S. consumers, 62% said they were concerned or very concerned about AI’s impact on their privacy, and 85% said companies should be required to disclose when they use AI. But when asked what they most wanted disclosed, consumers prioritized data practices: how data is used (72%), what data is collected (71%), and who it is shared with (69%). All three ranked above whether AI makes automated decisions (60%) or whether AI is used in advertising at all (51%).

Humantel’s study reinforces that point, but only selectively. Its March 2026 survey of 4,047 U.S. consumers found that people are not exactly embracing AI: 42% said AI makes them nervous about the future, while only 25% said it will ultimately make life better for most people. That is less a signal of enthusiasm than of wary acceptance.

That skepticism becomes more concrete in advertising contexts. Humantel found that only 25% were comfortable with AI helping create the ads they see. And in AI-driven discovery, trust remains limited: among AI users, only 25% said AI gives results free from advertiser influence, compared with 37% for traditional search.

That distinction matters. Consumers are not asking for algorithmic transparency. They are asking for the kind of transparency that helps them judge whether an experience is credible: how their data is being used, whether content is authentic, and whether someone is still accountable for what they are seeing. Transparency matters most when it addresses the actual source of distrust.

That helps explain why a risk-based disclosure model makes more sense than a universal “made with AI” label. If the real concern is misuse of data, fabricated realism, or uncertainty about what is real and whether commercial influence is hidden, then a blanket label may create the appearance of transparency without resolving any of those concerns. What matters more is whether AI changes something meaningful about truthfulness, identity, or the handling of personal data.

For advertisers, the lesson is not that every use of AI needs a label. It is that disclosure should help consumers resolve the uncertainty AI creates. When AI changes the authenticity of an ad, the identity being represented, the role of personal data, or the source of accountability, transparency becomes meaningful. When disclosure merely says “AI was used,” it may satisfy a process requirement while leaving the real trust problem untouched.

Author

Tracy Adams, PhD

Senior Director of Research & Insights

ARF

SOURCES

Advertising Research Foundation. (2026, January). The ARF 8th annual privacy study. The ARF.

Humantel Media. (2026, March). AI in ads: Key takeaways.

Interactive Advertising Bureau. (2026, January 15). AI transparency and disclosure framework. IAB.

Pavón, P. (2025, February 3). Expanding GenAI transparency for Meta’s ads products. Meta Newsroom.

YouTube Help. (n.d.). Disclose use of altered or synthetic content. https://support.google.com/youtube/answer/14328491